Month: December 2017

A recent report from Forrester found that 57 percent of marketers find it difficult to give their stakeholders in different functions access to their data and insights.

Some of Google Inc.’s products and services are already pretty good at handling queries spoken in plain English. We no longer have to type our searches into Google, for example; we can simply speak them and get the answers we need. We can also tell our smartphones to find the fastest route to a destination while traveling, and they will quickly display a map with GPS directions.

Now, the company is bringing this natural-language ability to its Google Analytics service. As of Tuesday, Google Analytics users can speak, rather than type, queries such as “new users from January 1 to May 7,” and the service will immediately display that information. It can also handle more advanced questions, returning charts and graphs that visualize all kinds of web traffic metrics.
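Google has not said how Analytics Intelligence turns a spoken question into a report. As a rough illustration only, the following sketch maps the example query onto a request body shaped like the public Google Analytics Reporting API v4 `reports.batchGet` call. It uses a toy regular expression, not real natural-language processing, and the `to_request` function, the `METRICS` phrase table, the view ID and the year (the spoken query names none) are all hypothetical assumptions.

```python
import re
from datetime import date

# Hypothetical phrase-to-metric table; ga:newUsers, ga:users and ga:sessions
# are Core Reporting metric names, but this mapping is only an illustration.
METRICS = {"new users": "ga:newUsers", "users": "ga:users", "sessions": "ga:sessions"}

MONTHS = {name: i for i, name in enumerate(
    ["january", "february", "march", "april", "may", "june", "july",
     "august", "september", "october", "november", "december"], start=1)}

# Toy pattern: "<metric> from <Month> <day> to <Month> <day>" and nothing else.
PATTERN = re.compile(
    r"^(?P<metric>[a-z ]+?) from (?P<m1>[a-z]+) (?P<d1>\d{1,2})"
    r" to (?P<m2>[a-z]+) (?P<d2>\d{1,2})$"
)


def to_request(query: str, view_id: str, year: int) -> dict:
    """Turn a narrow plain-English query into a v4-style batchGet request body."""
    match = PATTERN.match(query.strip().lower())
    if match is None or match["metric"] not in METRICS:
        raise ValueError(f"unsupported query: {query!r}")
    if match["m1"] not in MONTHS or match["m2"] not in MONTHS:
        raise ValueError(f"unknown month in query: {query!r}")
    # The query carries no year, so the caller must supply one (an assumption).
    start = date(year, MONTHS[match["m1"]], int(match["d1"]))
    end = date(year, MONTHS[match["m2"]], int(match["d2"]))
    return {
        "reportRequests": [{
            "viewId": view_id,
            "dateRanges": [{"startDate": start.isoformat(),
                            "endDate": end.isoformat()}],
            "metrics": [{"expression": METRICS[match["metric"]]}],
        }]
    }
```

For example, `to_request("new users from January 1 to May 7", "12345", 2017)` yields one report request covering 2017-01-01 to 2017-05-07 with the metric `ga:newUsers`; any phrasing outside the narrow pattern raises `ValueError`, which is exactly the gap a real language model is meant to close.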

To use the new natural language capability in Google Analytics, users click the Intelligence button, which opens a side panel. In the mobile app for Android and iOS, a new Intelligence icon in the upper right-hand corner activates the feature.

Google is adding natural language queries as part of a new set of Analytics Intelligence tools that use machine learning to help users better understand their website data. Google said it added the feature after realizing that even some of the most basic information in Analytics was not easily discoverable for many users.

“We’ve talked to web analysts who say they spend half their time answering basic analytics questions for other people in their organization,” said Anissa Alusi, a Google Analytics product manager, in a blog post. “In fact, a recent report from Forrester found that 57 percent of marketers find it difficult to give their stakeholders in different functions access to their data and insights. Asking questions in Analytics Intelligence can help everyone get their answers directly in the product.”

Other Analytics Intelligence tools include automated insights, smart goals, smart lists and session quality. According to Google, automated insights will give mobile users information that was previously available only on desktop.

The company said the new features, including natural language processing, are rolling out now and will be available to users worldwide within the next few weeks.

It is almost here…the time of year I love so much… #CES

There is nothing better than starting off the new year in Vegas with 600 startups and over 4,000 exhibitors crammed into 2.6 million net square feet. This January will mark my 20th year attending CES. I look forward to connecting with friends and hearing what has you excited for 2018 as I compile my annual recap.

Look for some exciting news coming from my team as well because, you know, we couldn’t let you get ALL the limelight.

2018 is going to be great! Hit me up and let’s connect @mybuddypeted or pete@thebuddygroup.com

Internet of Things (IoT) market is growing by 15% annually.

Worldwide spending on the Internet of Things is forecast to reach $772.5 billion in 2018, an increase of 15 percent over the $674 billion that will be spent in 2017, according to a new report by International Data Corp.

IDC forecasts worldwide IoT spending to sustain a compound annual growth rate (CAGR) of 14 percent through the 2017-2021 forecast period, surpassing the $1 trillion mark in 2020 and reaching $1.1 trillion in 2021.
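The forecast figures can be sanity-checked with the standard compound-growth formula, value = base × (1 + rate)^years. The minimal sketch that follows uses only the numbers quoted from IDC; the function names are illustrative. Because IDC's published figures are rounded, the results land near, not exactly on, the reported values: 2017-to-2018 growth works out to about 14.6 percent (reported as 15 percent), and compounding $674 billion at 14 percent for four years gives roughly $1,138 billion, or about $1.1 trillion in 2021.

```python
# Rough consistency check of the IDC IoT spending figures quoted above.
# All inputs come from the report; years are counted from the 2017 base.

BASE_2017 = 674.0      # worldwide IoT spending in 2017, $ billions
FORECAST_2018 = 772.5  # IDC forecast for 2018, $ billions
CAGR = 0.14            # IDC compound annual growth rate, 2017-2021


def growth_rate(old: float, new: float) -> float:
    """Fractional year-over-year growth from `old` to `new`."""
    return new / old - 1.0


def project(base: float, rate: float, years: int) -> float:
    """Value after compounding `base` at `rate` for `years` years."""
    return base * (1.0 + rate) ** years


if __name__ == "__main__":
    print(f"2017->2018 growth: {growth_rate(BASE_2017, FORECAST_2018):.1%}")
    print(f"2021 at a 14% CAGR: ${project(BASE_2017, CAGR, 2021 - 2017):,.0f}B")
```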

IoT hardware will be the largest technology category in 2018, with $239 billion going largely toward modules and sensors along with some spending on infrastructure and security. Services will be the second largest technology category, IDC said, followed by software and connectivity.

Software spending will be led by application software along with analytics software, IoT platforms, and security software. Software will also be the fastest growing technology segment, with a five-year CAGR of 16.1 percent.

Services spending will also grow at a faster rate than overall spending, with a CAGR of 15.1 percent. It will nearly equal hardware spending by the end of the forecast, the report said.
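A five-year CAGR is easier to read as a cumulative multiplier, (1 + CAGR)^5. The short sketch that follows, with illustrative names, applies that to the two segment rates quoted above: 16.1 percent works out to roughly 2.11 times and 15.1 percent to roughly 2.02 times, so both software and services spending would about double over the period. It also includes the inverse calculation for recovering a rate from a multiplier.

```python
# Cumulative growth implied by the five-year segment CAGRs reported by IDC.

FIVE_YEAR_CAGR = {
    "software": 0.161,  # fastest-growing technology segment
    "services": 0.151,
}
YEARS = 5


def cumulative_multiplier(cagr: float, years: int) -> float:
    """How many times larger a quantity ends up after compounding at `cagr`."""
    return (1.0 + cagr) ** years


def implied_cagr(multiplier: float, years: int) -> float:
    """Inverse: the constant annual rate that yields `multiplier` over `years`."""
    return multiplier ** (1.0 / years) - 1.0


if __name__ == "__main__":
    for segment, rate in FIVE_YEAR_CAGR.items():
        m = cumulative_multiplier(rate, YEARS)
        print(f"{segment}: x{m:.2f} over {YEARS} years")
```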

“By 2021, more than 55 percent of spending on IoT projects will be for software and services,” said Carrie MacGillivray, vice president, Internet of Things and mobility at IDC. “Software creates the foundation upon which IoT applications and use cases can be realized.”

https://www.information-management.com/news/internet-of-things-market-growing-by-15-percent-annually

Google Lens is far from perfect. But it’s much, much better than Google Goggles ever was!

Google Lens has gone live, or is about to, on Pixel phones in the US, the UK, Australia, Canada, India and Singapore (in English). Over the past couple of weeks, I’ve been using it extensively and have had mostly positive results, though not always.

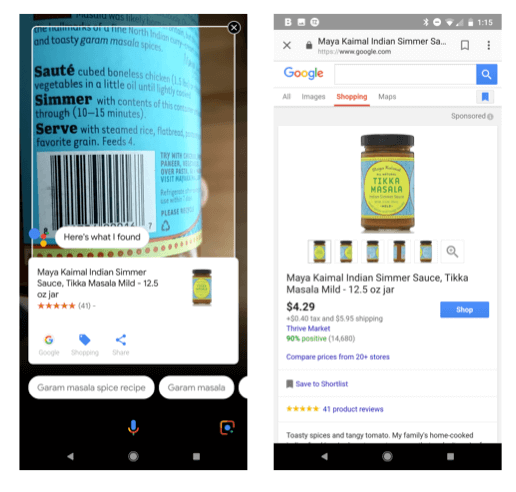

Currently, Lens can read text (e.g., business cards), identify buildings and landmarks (sometimes), provide information on artwork, books and movies (from a poster) and scan barcodes. It can also identify products (much of the time) and capture and keep (in Google Keep) handwritten notes, though it doesn’t turn them into text.

To use Lens, you tap the icon in the lower right of the screen when Google Assistant is invoked. Then you tap the image or object or part of an object you want to scan.

As a barcode scanner, it works nearly every time. In that role, it’s a worthy, more versatile substitute for Amazon’s app, and just as fast or faster in many cases. If there’s no barcode available, it can often correctly identify products from their packaging or labels. It also does very well identifying famous works of art and books.

Google Lens struggled most with buildings and with products that didn’t have any labeling on them. For example (below), it was rather embarrassingly unable to identify an Apple laptop as a computer, and it misidentified Google Home as “aluminum foil.”

When Lens gets it wrong it asks you to let it know. And when it’s uncertain but you affirm its guess, you can get good information.

I tried Lens on numerous well-known buildings in New York, and it was rarely able to identify them. For example, the three buildings below (left to right) are New York City Hall, the World Trade Center and the Oculus transportation hub. (In case you’re thinking that in the first picture I tapped the tree rather than the building: I took multiple pictures from different angles, and Lens didn’t get a single one right.)

I also took lots of pictures of random objects (articles of clothing, shoes, money), and those searches were a bit hit-and-miss, though when Lens missed, it was often a near-miss.

As these results indicate, Google Lens is far from perfect. But it’s much, much better than Google Goggles ever was, and it will improve over time. Google will also add capabilities that expand its use cases.

It’s best right now for very specific uses, which Google tries to point out in its blog post. One of the absolute best uses is capturing business cards and turning them into contacts on your phone.

Assuming that Google is committed to Lens and continues investing in it, over time it could become a widely adopted alternative to traditional mobile and voice search. It might eventually also drive considerable mobile commerce.